Performing Objective Prioritization

Much of product management is prioritization. Which product to build; which feature to develop; which critical launch items to deliver. A prioritized list is critical—ideally sorted by some form of business value. Using an objective algorithm is the best approach.

Product leaders must choose constantly from a long list of reasonable items for which they have limited resources. My Quick Prioritization method is an easy way to decide top-priority items.

Many teams try to add more to any proposed rating scheme, particularly hard revenue and cost estimates. But can you estimate them reliably? How much revenue will the capability generate? Who knows?

How accurate are your estimates? Most teams report they are off by as much as 100%. What good are wildly inaccurate estimates? Not much.

Many prioritization schemes (including mine) suffer from being subjective rather than objective, particularly if you add weights to each factor: “column B is worth 40 points and column C is worth 20 points.” Before you know it, your team is arguing the math; they are arguing the values of the columns instead of the importance of the rows.

Whenever you have a 1-to-5 star system or a 1-to-10 sliding scale, you end up arguing why you chose ‘3’ instead of ‘4’—and you usually can’t remember why you specified it.

That’s why we’ve stopped using a 5-star system in our software. In releases prior to December 2016, we used a 5-star system for product opportunity scoring, competitive threat level, and more. Now we use a series of true/false statements.

For example, defining the threat level of a competitor is helpful when you’re deciding if you should respond to a competitive feature. A competitor with a threat level of 10 (out of 100) can safely be ignored; a competitor with a threat level of 90 warrants your serious attention. How do you determine a threat level? While we used to ask you to rate the competitor with one to five stars, today’s technique is a series of “yes or no” questions.

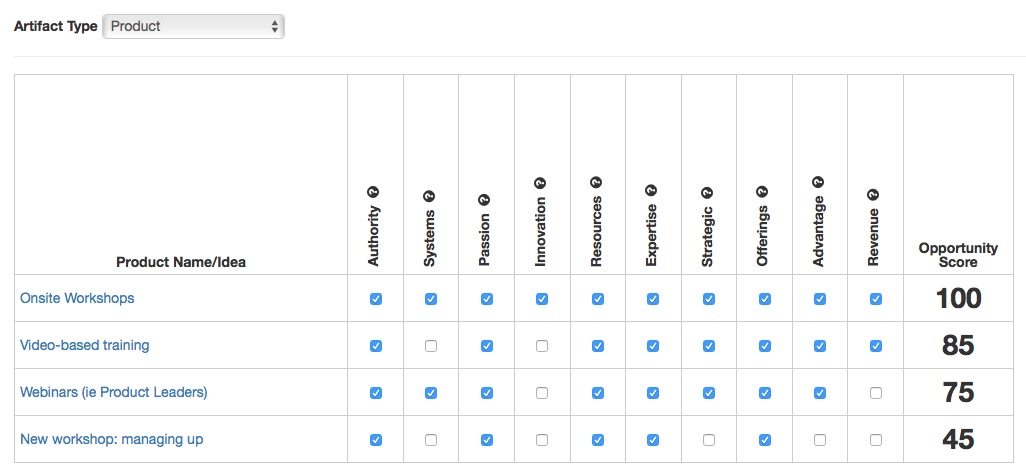

For each type of artifact (products, stories, competitors, and customer requests), use a tool to compare key strategic aspects. Here's a screenshot from Under10 Playbook to illustrate the comparison of products.

These true or false questions are much easier to answer accurately.

- Do we have the authority (or brand awareness) to offer this?

- Is this a new way to solve a customer problem?

- Do most of our customers need this capability?

- Will this capability result in new revenue?
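To make the idea concrete, here is a minimal sketch of how yes/no answers like these could be turned into a 0-to-100 score and a sorted list. It is an illustration only, not how Under10 Playbook calculates its scores: the question keys, the `Item` class, the sample ideas, and the equal weighting of every question are all assumptions made for this example.

```python
from dataclasses import dataclass

# Hypothetical keys paraphrasing the four questions above.
QUESTIONS = (
    "has_authority",        # Do we have the authority (or brand awareness)?
    "new_solution",         # Is this a new way to solve a customer problem?
    "most_customers_need",  # Do most customers need this capability?
    "new_revenue",          # Will this capability result in new revenue?
)


@dataclass
class Item:
    name: str
    answers: dict  # question key -> True/False


def score(item: Item) -> int:
    """Percentage of 'yes' answers, 0 to 100.

    Assumption: every question counts equally, so there are no
    weights to argue about. Unanswered questions count as 'no'.
    """
    yes_count = sum(1 for q in QUESTIONS if item.answers.get(q, False))
    return round(100 * yes_count / len(QUESTIONS))


def prioritize(items):
    """Return items sorted from highest score to lowest."""
    return sorted(items, key=score, reverse=True)


# Made-up sample data for illustration.
items = [
    Item("Idea A", {"has_authority": True, "new_solution": False,
                    "most_customers_need": False, "new_revenue": False}),
    Item("Idea B", {"has_authority": True, "new_solution": True,
                    "most_customers_need": True, "new_revenue": False}),
    Item("Idea C", {"has_authority": True, "new_solution": False,
                    "most_customers_need": True, "new_revenue": False}),
]

for item in prioritize(items):
    print(f"{score(item):3d}  {item.name}")
```

Because each answer is simply true or false, any disagreement is about a specific statement ("Do most customers need this?") rather than about whether an item deserves a 3 or a 4.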

You don’t want to find yourself arguing the merits of your decision when you can’t remember why you chose one result over another.

Whether rating a competitor or a feature or a product idea, objective decisions beat subjective ones every time.